Your developers are about to manage 30 AI agents writing code simultaneously. Is your executive team ready for what that means?

On 1 January 2026, Steve Yegge, former Amazon and Google engineer and co-author of the book Vibe Coding, released Gas Town: an orchestrator that lets a single developer manage 10, 20, even 30 parallel AI coding agents, all generating production code at machine speed.

One person. Thirty autonomous coders. Thousands of commits per week. This is not theoretical. It is working today.

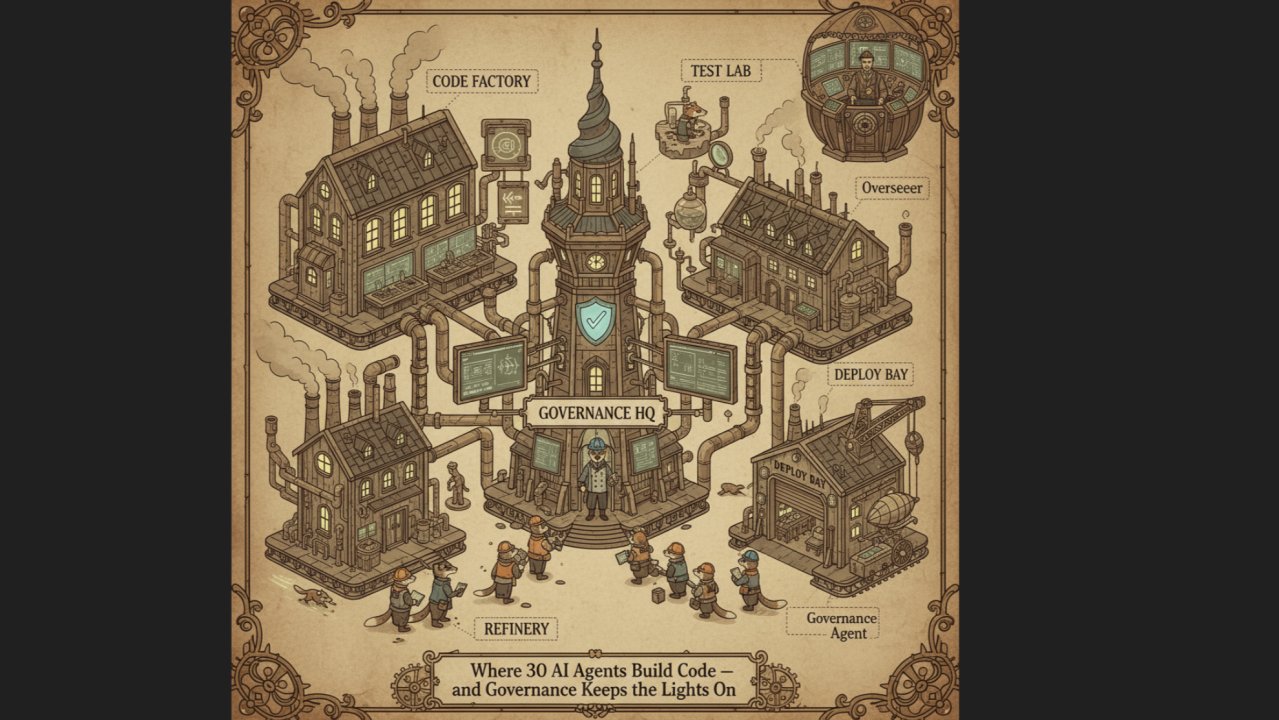

Before the governance implications, take 20 minutes and read Yegge's original post (linked below). His writing is spectacular: a brilliant blend of deep technical insight, genuinely funny prose, and AI-generated graphics that bring the whole concept to life. It is a masterclass in what happens when someone combines serious thought leadership with AI tools across the board, some to code, some to write, some to create visuals. The future of professional output in a single blog post.

The opportunity is enormous.

Gas Town is early and experimental, but it represents where enterprise software development is heading. Anthropic, OpenAI, and Google are all building agentic coding tools. The trajectory from 'developer using Copilot' to 'developer orchestrating 30 AI agents' is shorter than most executive teams realise.

Consider a story every Australian knows.

The Bureau of Meteorology's website redesign cost $96.5 million, ballooning from an original front-end estimate of $4.1 million. Years of development involving major global consultancies. The result was a site so poorly received that the Bureau had to reinstate the old one within days of launch.

Now imagine an alternative. A small team of three or four orchestration specialists managing fleets of AI coding agents. The full lifecycle (business analysis, development, testing, deployment) delivered in months rather than years, at a fraction of the cost. If it does not work, you have risked $5 or $10 million, not $96 million. If it does work, you have transformed your delivery model permanently.

The economics of software delivery are about to shift fundamentally.

Opportunity without governance is just risk by another name.

This is not a problem you can delegate to your CIO or CISO alone. They will be essential to solving it, but the strategic implications (cost structures, delivery timelines, liability, workforce planning) land squarely across the executive team and ultimately at the board table.

When AI agents are writing, reviewing, and merging code autonomously, the questions span the C-suite.

Which agent wrote which code, and what is your audit trail when something goes wrong? Can your change management controls operate at machine speed, or do they become theatre? Is the agent writing the code the same one approving the merge? Are your quality gates (static analysis, dependency scanning, secrets detection) embedded in the pipeline, or bolted on afterwards?
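For the technically minded, here is a minimal sketch of what "embedded in the pipeline" could look like for three of those questions: attribution, separation of duties, and quality gates. It is an illustration only, not how Gas Town or any particular tool works. The `ChangeRecord` fields, the gate names, and the `merge_violations` function are all hypothetical, chosen to mirror the questions above.

```python
from dataclasses import dataclass

# Hypothetical gate names, mirroring the examples in the text above.
REQUIRED_GATES = ("static_analysis", "dependency_scan", "secrets_detection")


@dataclass(frozen=True)
class ChangeRecord:
    """One proposed merge, as an audit trail might record it (assumed shape)."""
    commit_id: str
    author_agent: str              # which agent wrote the code
    approver: str                  # which agent or person approved the merge
    gates_passed: frozenset = frozenset()


def merge_violations(change: ChangeRecord) -> list:
    """Return the governance rules this change breaks; an empty list means none."""
    violations = []
    if not change.author_agent:
        violations.append("no recorded author: audit trail is incomplete")
    if change.approver == change.author_agent:
        violations.append("author approved its own merge")
    for gate in REQUIRED_GATES:
        if gate not in change.gates_passed:
            violations.append(f"missing quality gate: {gate}")
    return violations


# Example: an agent approving its own work after only one gate has run.
risky = ChangeRecord("abc123", "agent-07", "agent-07", frozenset({"static_analysis"}))
for problem in merge_violations(risky):
    print(problem)
```

A check like this runs in milliseconds, so it can keep pace with machine-speed commits. The harder decisions are the ones it encodes: who may approve, which gates are mandatory, and who is accountable when a rule is overridden.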

This is not a 2030 problem. It is a 2026 leadership conversation.

Start the conversation now.

The developers in your organisation are already experimenting with AI coding agents. Your development leads know this is coming even if it has not reached the executive floor yet.

The organisations that build governance into agentic development from the start will have a genuine advantage over those retrofitting controls after the fact.

The code is already being written. The question is whether your leadership team is shaping how it happens.

For more on how my thinking on agentic AI developed from here, read: My AI Governance Journey: From Sceptic to Practitioner

A note on how I work.

I use AI. Deliberately, and without apology.

Every insight, argument, and position in this post is mine: drawn from 20+ years of governance practice, client work, and hard-won experience in boardrooms and organisations across Australia. The ideas, the judgements, the professional reputation behind them — that is entirely human.

What AI contributes is craft: language, structure, fact-checking, and the kind of editorial discipline that turns a practitioner's thinking into something worth reading. I bring the substance. AI helps me express it clearly.

This is a partnership, not a shortcut. I would not publish anything I could not defend in a boardroom without notes.

I believe transparency about AI use is itself a governance practice. So I will always tell you.